Digital symptom trackers have come under a lot of scrutiny in the last few years, criticised for not being accurate enough and for claiming to be more effective than they are.

But according to a new study, there is one which is better than the rest: Ada Health.

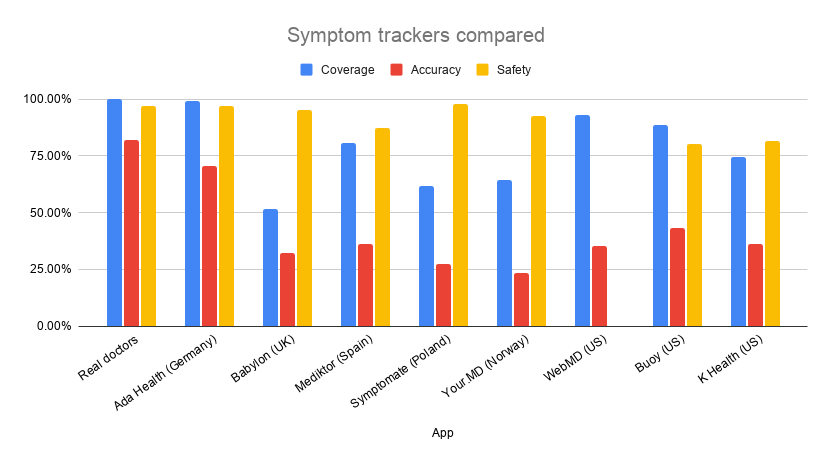

Admittedly, the study was commissioned and co-authored by Ada Health. But it shows a huge gulf between Ada and its nearest rivals, Babylon, Mediktor, Symptomate and Your.MD, when it comes to accuracy.

Symptom checkers work by asking users a series of questions to “find out what’s going on” after they have filled in basic personal, medication and health information. For example, someone might say they are out of breath and have a rash, and want to know what might be wrong.

In the case of Ada, the app reasons from the user’s answers much as a doctor would, using the information to decide which further questions to ask in order to cover all scenarios.

For patients the app’s ‘advice’ (it is emphatically not a diagnosis) aims to help them make better health decisions (whether to go to a doctor or not, for example); for doctors it is a tool to help them make better decisions.

Doctors are still better than AI

In the study published Wednesday in BMJ Open, the doctors managed to accurately diagnose the patient in 82% of the cases whilst Ada Health’s symptom tracker scored 71%. This chimes with the notion that real doctors still get better results than the diagnostics tool using AI to assess symptoms.

But what was notable was that Ada Health did get pretty close.

The other European trackers assessed in this study were UK-based Babylon Health, Spanish Mediktor, Polish Symptomate and Norwegian-founded Healthily (formerly Your.MD).

Each app was tested with 200 clinical vignettes – think of a doctor doing the rounds in a hospital-based television series: “53-year-old male, suffering from pain in left arm and with a previous heart condition”.

The symptom checkers would then get scores depending on their coverage of patients, the accuracy of the diagnostics tool and its safety.

Whilst all the European apps scored 87% or higher on safety (e.g. not telling you to rest at home whilst you are having a heart attack), none of the others got close to the accuracy and coverage of Ada, a point noted by one of the report’s authors, Paul Wicks, a digital health expert and consultant to Ada Health.

He compares it to a study published in 2015.

“In general, the sort of coverage and safety and accuracy that they found five years ago was poorer all over the board but also highly variable. And there's still a lot of variability between them,” Wicks tells Sifted.

Bad coverage

A bad score on coverage means that there are key patient groups excluded by the app. For example, the apps may not include children, pregnant women or those with mental health conditions — groups that could benefit from

Babylon Health’s symptom tracker, which is widely used within the NHS and overseas, scored only 52% on coverage and 32% on accuracy. According to Dr Keith Grimes, clinical digital health director at Babylon Health, the scores are marked down because Babylon does not suggest conditions for some groups, such as children and pregnant women.

“The number on accuracy is based on the “required-answer” approach, as in that we got marked down for providing no answer. So if a vignette is tested against our app but is from a group that we would provide triage for but not a condition, for example paediatrics, that essentially marks us down in terms of the accuracy,” Grimes tells Sifted.
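The distinction Grimes draws can be illustrated with a little arithmetic. The sketch below is not the study’s scoring code: the function names, the data structure and the ten toy vignettes are all made up for illustration, and it assumes that a vignette counts as a hit whenever the correct condition appears anywhere among the app’s suggestions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VignetteResult:
    """One test case. All fields are hypothetical, for illustration only."""
    correct_condition: str
    suggested: Optional[List[str]]  # None means the app offered no condition


def is_hit(r: VignetteResult) -> bool:
    # Assumption: a hit is the correct condition appearing among the suggestions.
    return bool(r.suggested) and r.correct_condition in r.suggested


def coverage(results: List[VignetteResult]) -> float:
    # Share of vignettes for which the app suggested any condition at all.
    return sum(1 for r in results if r.suggested) / len(results)


def required_answer_accuracy(results: List[VignetteResult]) -> float:
    # Every vignette counts; offering no condition is scored as a miss.
    return sum(1 for r in results if is_hit(r)) / len(results)


def provided_answer_accuracy(results: List[VignetteResult]) -> float:
    # Only vignettes where the app actually suggested something are counted.
    answered = [r for r in results if r.suggested]
    if not answered:
        return 0.0
    return sum(1 for r in answered if is_hit(r)) / len(answered)


# Toy data: 4 correct answers, 2 wrong answers, 4 vignettes with no answer.
toy = (
    [VignetteResult("asthma", ["asthma", "bronchitis"])] * 4
    + [VignetteResult("angina", ["heartburn"])] * 2
    + [VignetteResult("croup", None)] * 4
)

print(f"coverage: {coverage(toy):.0%}")
print(f"required-answer accuracy: {required_answer_accuracy(toy):.0%}")
print(f"provided-answer accuracy: {provided_answer_accuracy(toy):.0%}")
```

With these invented numbers the same app scores 40% when every vignette requires an answer, but about 67% when only the cases it chose to answer are counted, which is why low coverage drags a required-answer accuracy score down.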

The Spanish AI tool Mediktor had a high score on coverage (81%) and also scored higher on accuracy than Babylon.

Your.MD, now called Healthily, got the worst scores when it came to accuracy: only 24% of its diagnoses were correct. However, the company has openly stated that its app shouldn’t be used in this way to begin with.

“We don’t use AI to give people a diagnosis,” Healthily’s chief executive Matteo Berlucchi told Sifted in October.

“You shouldn’t really even bring up a potential diagnosis because it’s too dangerous. And you don’t have enough data to be credible, to be honest. But what you can do very well with this technology is to say whether you should see a doctor or not.”

Data is key

So why is Ada Health doing so well in comparison to the rest?

According to Wicks, the startup has two advantages: it was founded by doctors, and it has a large user base that lets it continuously improve the tool using artificial intelligence.

“Ada has come out very well in this study. But this is a continually evolving field so we anticipate that all of these providers will constantly be working on their algorithms and all in all, improving, but also perhaps choosing to specialise and differentiate themselves.”

“The more data you have, and the more feedback you can get from your users can become an incentive to continue improving your system,” Wicks adds.

Ada now has more than 10m users worldwide and has so far completed more than 20m assessments with the symptom tracker.

With Ada’s scores top of the class, it is worth mentioning that the study is also closely linked to the team at the startup.

Limited test cases

The study was developed by members of Ada’s medical and scientific teams alongside independent clinicians and academics, and is partly a follow-on to a study published in 2015 that used similar vignettes.

The vignettes, short descriptions of a patient and their symptoms of the kind a doctor would use, covered a range of respiratory conditions, arthritis pain, mental health issues such as anxiety and sleep disturbances, as well as heart conditions and cancer. But with only 200 vignettes tested on each app, is that enough to produce a trustworthy result?

“I think for us what we wanted to ensure was that the vignettes covered a good spread of male and female of the different types of conditions that we see in a health system like the NHS,” Wicks says.

By comparison, Babylon Health published a study in Nature Communications in August that used 1,671 clinical vignettes and produced a better result on accuracy. In that study, the digital health startup actually did slightly better than real doctors, scoring about one percentage point higher: 72.5% vs 71.4%.

However, the technology tested in that study is not yet available to the public. But according to Grimes, if you look only at the cases in the BMJ Open study where the app offered a condition, the scores are similar in both reports.

“If you look at the vignettes where a condition has been provided there's no significant difference between us and the best performing app,” says Grimes.

Wicks has not read the Babylon Health study, but he is keen to see more comparative reports in this field.

“My hope is that we'll see more studies like this that are comparing apples to apples because if you compare just one you get quite a narrow view of the broader space.”

“But my hope is that the methods become more consistent so that everybody takes more of a similar approach. In that way we can make sure these comparisons continue to be useful and relevant,” Wicks adds.

Mimi Billing is Sifted’s Nordic correspondent. She also covers healthtech, and tweets from @MimiBilling

[Correction: In a previous version of this article, the number of vignettes used in the study was said to be 50 — the right number is 200]