For over a year now — a long time in the era of GenAI — AI “agents” have been the next big thing, right around the corner.

Mainstream excitement around these tools — which promise to optimise powerful large models to carry out a set of tasks or jobs on their own, rather than just answer queries from humans one by one — kicked off with the release of AutoGPT by Scotland-based developer Toran Bruce Richards in March 2023 to a fanfare of posts on AI Twitter.

But, as with many developments in the world of GenAI, the delivery of genuinely useful results or products didn’t swiftly follow, and it seemed like most people quickly forgot about making new things with tools like AutoGPT.

Now, a new report from Amsterdam-headquartered software developer and investor Prosus Group suggests that these AI agents are starting to prove useful in some business contexts, albeit with some fairly serious limitations.

Narrowing down

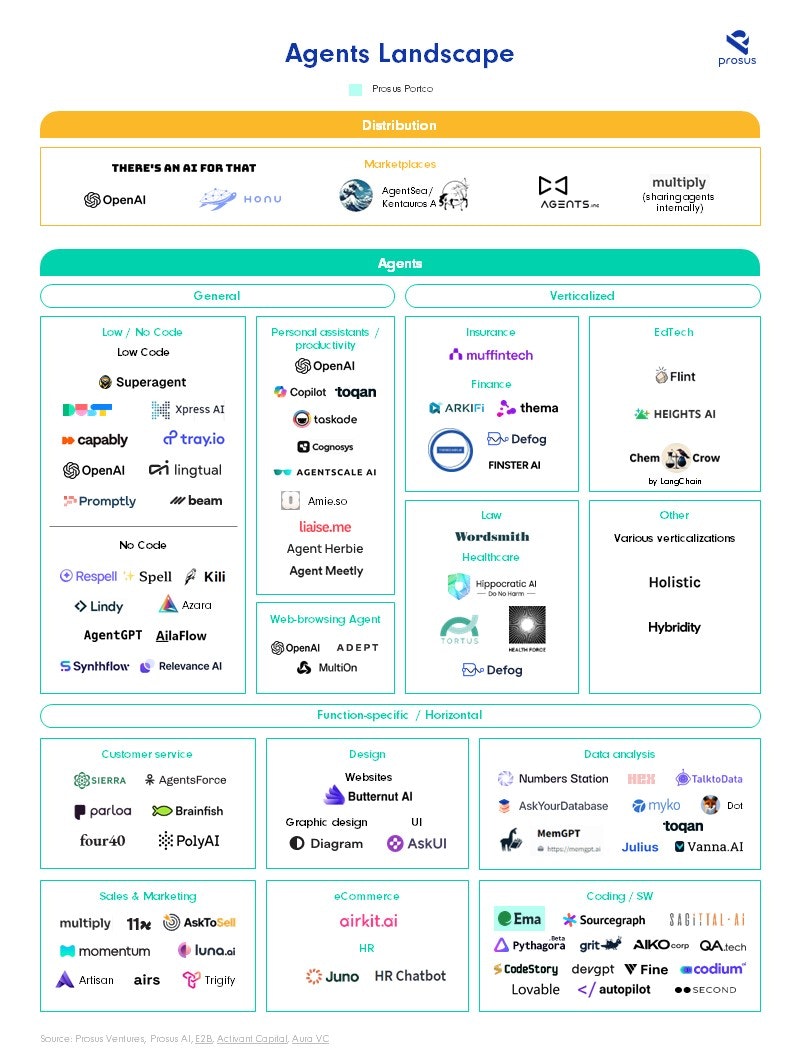

The report, published today, analysed 94 companies building AI agents and distribution platforms for them, and found that the tools fall into three main subsectors: agents for “general tasks” like workplace productivity; “function-specific” agents that perform a specific job, such as that of a sales development representative; and “industry-specific” agents, which aim to automate various tasks across a given profession.

Paul van der Boor, senior director of data science at Prosus Group, tells Sifted that it’s likely to be function-specific agents that win over the market first.

“You are training them or telling them to do things that are fairly well-defined like you would for a job description,” he says. “Our experience is that that's where there will be a lot of excitement because that's where you can get them to work pretty well.”

One function-specific agent startup that appears to be gaining traction is London-based 11x, which develops “digital workers”, AI agents that the company says can do the job of a sales development representative (SDR).

In January, founder and CEO Hasan Sukkar told Sifted that its digital workers can outperform human benchmarks when it comes to successfully converting a lead into a meeting.

“It's not actually difficult to be better than the average worker,” he said. “The early feedback from customers is that this has tremendous ROI. One of our customers, they're using [it] at scale, they had 10 people running their SDR function in a way that the system is doing single-handedly.”

Limitations

But Sukkar says that it’s still hard to get 11x’s AI agents outperforming the very best humans, pointing to one of the big limitations of the technology.

Large language models (LLMs) like those behind ChatGPT are essentially statistical predictors of the next word in a sentence, making them inherently unpredictable and unreliable when it comes to delivering consistent responses and results.

“You need to be able to basically manage the behaviour of these agents, which often still tends to be non-deterministic,” explains van der Boor. “If you ask a question, you want them to reliably answer that question in the same way if you ask that same question 10 times.”
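One rough way to put a number on that kind of repeatability is to ask the same question several times and measure how often the most common answer comes back. The sketch below is illustrative only: `consistency` and `flaky_model` are hypothetical names, and the "model" is a hard-coded stand-in rather than a real LLM call.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def consistency(ask: Callable[[str], str], question: str, runs: int = 10) -> float:
    """Return the share of runs that gave the most common answer (1.0 = fully repeatable)."""
    # Normalise lightly so trivial differences in case or spacing do not count as disagreement.
    answers = [ask(question).strip().lower() for _ in range(runs)]
    most_common_count = Counter(answers).most_common(1)[0][1]
    return most_common_count / runs


# Hypothetical stand-in for a model: every fifth reply differs from the rest.
_replies = cycle(["42", "42", "42", "42", "41"])


def flaky_model(question: str) -> str:
    return next(_replies)


if __name__ == "__main__":
    score = consistency(flaky_model, "How many orders shipped last week?")
    print(f"Most common answer returned in {score:.0%} of runs")
```

A score of 1.0 would mean the agent answered identically every time. It says nothing about whether that answer is correct, which is a separate problem.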

He adds that the other big thing holding back AI agents today is the accuracy of GenAI models, which are known to regularly make mistakes: “Lots of use cases require 100% accuracy, or more than 99%.”

There’s also the issue of price. GenAI models are very data- and energy-intensive to run, so costs can climb quickly when lots of requests are being made to a model.

“We see the cost coming down very, very rapidly, but I think we'd still need to go down a lot more, especially for the scale that we see businesses operating at,” says van der Boor. “That needs to become much, much cheaper to be viable economically.”

11x’s Sukkar told Sifted in January that some of its more advanced models cost as much as $12 per hour to run, which is more than the minimum wage in many countries.

The next act

Despite all of these limitations, van der Boor says agents will be the “next act of GenAI”, and adds that Prosus has been working with a number of its portfolio companies — including edtech company Udemy and delivery platforms Glovo and iFood — to develop a tool for data analysis.

It lets workers at these companies who don’t have expertise in database query languages like SQL ask the AI questions, in natural language, about things like customer behaviour. The agent then works out how to find that information and the best way to present an answer.

“This agent can come in, take that query, and basically fire off a bunch of actions and try and figure out things like, ‘How do I answer this? Which tables do I need to look at? What's the SQL query I might want to run? Let me critique the code I wrote, then let me run it. And let me validate the answer,’” van der Boor explains.
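The sequence van der Boor describes (look at the tables, draft a query, critique it, run it, validate the answer) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Prosus's implementation: the function names, the `orders` table and the hard-coded `fake_model` standing in for an LLM are all hypothetical, and the "critique" here is a simple rule-based check rather than a model reviewing its own code.

```python
import sqlite3
from typing import Callable


def answer_question(
    conn: sqlite3.Connection,
    question: str,
    draft_sql: Callable[[str, str], str],
) -> list[tuple]:
    # Step 1: work out which tables are available by reading the schema.
    schema = "\n".join(
        row[0] for row in conn.execute("SELECT sql FROM sqlite_master WHERE type = 'table'")
    )
    # Step 2: ask the model (here a stand-in function) to draft a query.
    sql = draft_sql(question, schema)
    # Step 3: critique the draft. Only read-only queries are allowed, and the
    # query must compile against the schema (sqlite3.Error is raised if not).
    if not sql.lstrip().lower().startswith("select"):
        raise ValueError("Only SELECT queries are allowed")
    conn.execute("EXPLAIN QUERY PLAN " + sql)
    # Step 4: run the query.
    rows = conn.execute(sql).fetchall()
    # Step 5: validate the answer. An empty result is treated as a failure.
    if not rows:
        raise ValueError("Query returned no rows")
    return rows


def fake_model(question: str, schema: str) -> str:
    # Hypothetical stand-in for an LLM call: always returns the same query.
    return (
        "SELECT customer, COUNT(*) FROM orders "
        "GROUP BY customer ORDER BY COUNT(*) DESC, customer"
    )


def build_demo_db() -> sqlite3.Connection:
    # Small in-memory database with made-up data for the example.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (customer TEXT)")
    conn.executemany("INSERT INTO orders VALUES (?)", [("ana",), ("ana",), ("ben",)])
    return conn


if __name__ == "__main__":
    print(answer_question(build_demo_db(), "Which customers order most?", fake_model))
```

In a real agent, the draft and critique steps would each be model calls, and a failed check would typically trigger another attempt rather than an error.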

He says that his development team has improved the reliability and the accuracy of the agent by focusing on technology to help the AI critique its own work, as well as by focusing on the quality of metadata it’s working with.

Van der Boor believes that as more tech is developed to improve the memory and planning abilities of large AI models, agents will only get better at understanding the “business rules” that govern their behaviour. This could allow for more generalised tools in the future that are adaptable across a broad range of workplace tasks.

For now, he says it’s “still very early days” — and that a future where AI will replace whole job functions beyond very junior roles is still a long way off.